VR/Flight Sim Testbed

We designed the Virtual Reality (VR) flight simulator testbed to estimate a pilot's cognitive load in real time through physiological sensors.

Published on 1/10/2022

Piloting fighter aircraft and performing mid-air maneuvers under high g-forces are complex, cognitively demanding multitasking activities that rely on working memory to satisfy task demands. Simulating such resource-intensive scenarios in VR and accurately predicting the pilot's cognitive state is therefore of utmost importance.

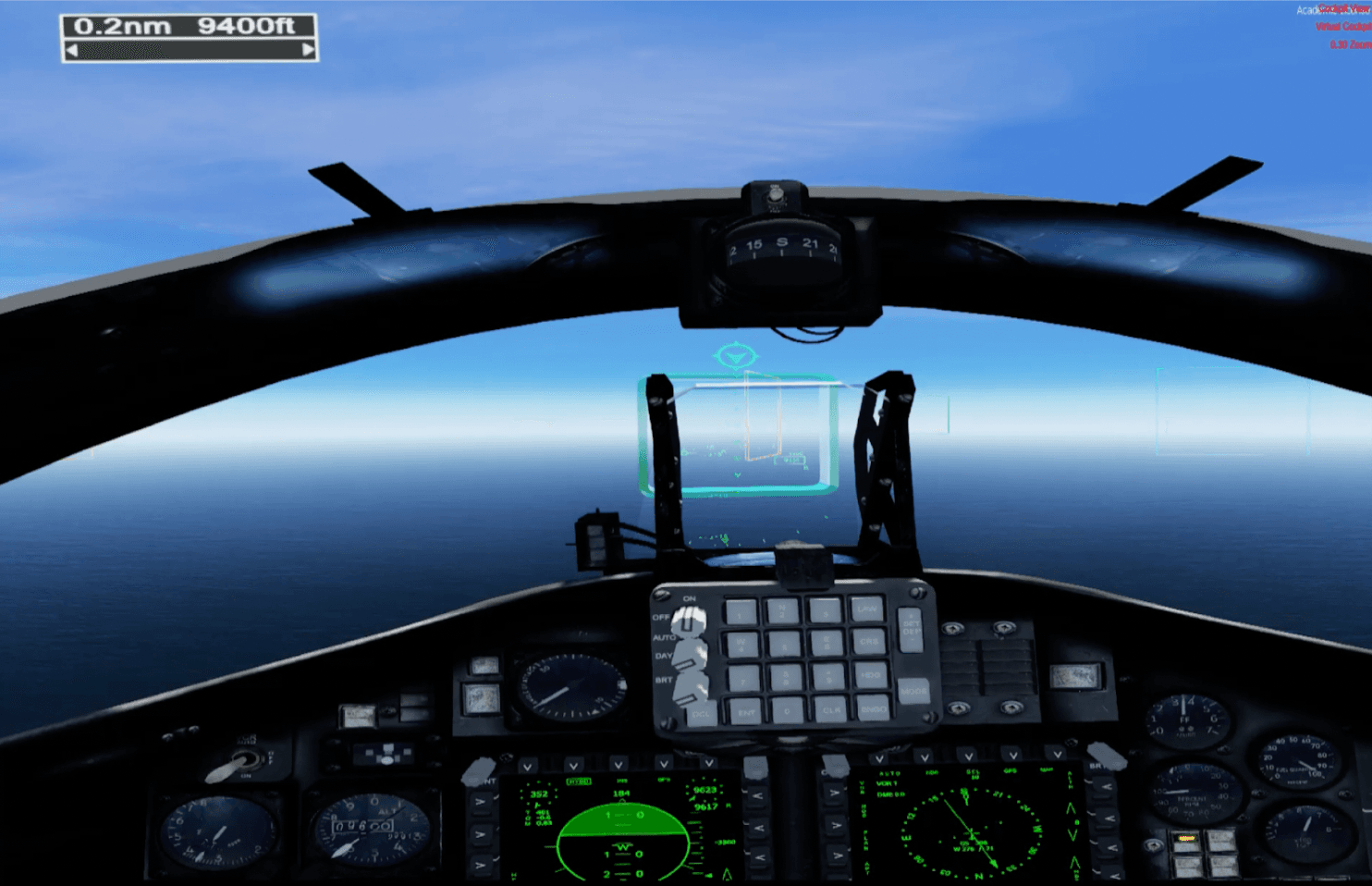

We designed the Virtual Reality (VR) flight simulator testbed to estimate a pilot's cognitive load in real time through physiological sensors. The testbed is built in Lockheed Martin's Prepar3d flight simulator and consists of six flying tasks of increasing complexity, performed in succession. The first task involves flying a straight-line path while passing through virtual gates placed at regular intervals. The second task is identical but adds minor turns between the virtual gates. The third task involves performing 180-degree turns between gates, and the fourth and fifth tasks consist of flying a loop and a barrel roll between gates, respectively. The sixth and final task requires reading data off the instrument panel while performing 180-degree turns between gates. The weather is simulated as bright, clear, and sunny and is kept constant across all tasks.

Between tasks, users fill out two questionnaires. The first is the Virtual Reality Sickness Questionnaire, which asks users whether they experienced any VR sickness symptoms, including general discomfort, fatigue, eyestrain, difficulty focusing, headache, fullness of the head, blurred vision, dizziness, and vertigo. The second asks users to rate, on a scale of one to ten, how mentally demanding the task was; this is the mental demand item of the NASA-TLX questionnaire.

The VR headset is the HP Reverb G2 Omnicept Edition, chosen because it integrates physiological sensors for eye tracking, pupillometry, and photoplethysmography. The Thrustmaster Warthog HOTAS controls the aircraft, and the Thrustmaster pedals control the rudder. A data-logging application runs in parallel with Prepar3d and logs the following data received from the headset: heart rate, heart rate variability, eye gaze vectors, and pupil dilation. Fixations and saccades can be extracted from the gaze vectors using the Velocity-Threshold Identification (I-VT) algorithm.
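To make the last step concrete, here is a minimal sketch of velocity-threshold classification in Python. It is not the testbed's actual implementation. It assumes the logged gaze data is available as a list of timestamps in seconds (strictly increasing) and a matching list of 3D gaze direction vectors. The function names and the 30 deg/s threshold are illustrative choices and would need tuning for the headset's sampling rate and noise level.

```python
import math


def angular_velocity(g1, g2, dt):
    """Angle between two gaze direction vectors, in degrees per second."""
    dot = sum(a * b for a, b in zip(g1, g2))
    norm1 = math.sqrt(sum(a * a for a in g1))
    norm2 = math.sqrt(sum(b * b for b in g2))
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    cos_angle = max(-1.0, min(1.0, dot / (norm1 * norm2)))
    return math.degrees(math.acos(cos_angle)) / dt


def ivt_classify(timestamps, gaze_vectors, threshold_deg_per_s=30.0):
    """Label each sample 'fixation' or 'saccade' by point-to-point velocity.

    The threshold is an assumed, illustrative value.
    Timestamps are assumed to be strictly increasing.
    """
    labels = []
    for i in range(1, len(gaze_vectors)):
        dt = timestamps[i] - timestamps[i - 1]
        velocity = angular_velocity(gaze_vectors[i - 1], gaze_vectors[i], dt)
        labels.append("saccade" if velocity > threshold_deg_per_s else "fixation")
    # The first sample has no predecessor, so it inherits the next label.
    return [labels[0]] + labels if labels else []


def group_fixations(timestamps, labels):
    """Collapse runs of consecutive fixation samples into (start, end) times."""
    fixations = []
    start = None
    for i, (t, label) in enumerate(zip(timestamps, labels)):
        if label == "fixation" and start is None:
            start = t
        elif label != "fixation" and start is not None:
            fixations.append((start, timestamps[i - 1]))
            start = None
    if start is not None:
        fixations.append((start, timestamps[-1]))
    return fixations
```

Calling `ivt_classify` on a logged session and passing the result to `group_fixations` yields fixation time spans. Samples labeled as saccades mark the rapid eye movements between them. A practical pipeline would typically also smooth the gaze signal and discard very short fixations, which this sketch omits.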